What is Lean

Lean is a functional programming language that makes it easy to write correct and maintainable code. You can also use Lean as an interactive theorem prover.

Lean programming primarily involves defining types and functions. This allows your focus to remain on the problem domain and manipulating its data, rather than the details of programming.

-- Defines a function that takes a name and produces a greeting.

def getGreeting (name : String) := s!"Hello, {name}! Isn't Lean great?"

-- The `main` function is the entry point of your program.

-- Its type is `IO Unit` because it can perform `IO` operations (side effects).

def main : IO Unit :=

-- Define a list of names

let names := ["Sebastian", "Leo", "Daniel"]

-- Map each name to a greeting

let greetings := names.map getGreeting

-- Print the list of greetings

for greeting in greetings do

IO.println greeting

Lean has numerous features, including:

- Type inference

- First-class functions

- Powerful data types

- Pattern matching

- Type classes

- Monads

- Extensible syntax

- Hygienic macros

- Dependent types

- Metaprogramming

- Multithreading

- Verification: you can prove properties of your functions using Lean itself

Tour of Lean

The best way to learn about Lean is to read and write Lean code. This article will act as a tour through some of the key features of the Lean language and give you some code snippets that you can execute on your machine. To learn about setting up a development environment, check out Setting Up Lean.

There are two primary concepts in Lean: functions and types. This tour will emphasize features of the language which fall into these two concepts.

Functions and Namespaces

The most fundamental pieces of any Lean program are functions organized into namespaces.

Functions perform work on inputs to produce outputs,

and they are organized under namespaces,

which are the primary way you group things in Lean.

They are defined using the def command,

which give the function a name and define its arguments.

namespace BasicFunctions

-- The `#eval` command evaluates an expression on the fly and prints the result.

#eval 2+2

-- You use 'def' to define a function. This one accepts a natural number

-- and returns a natural number.

-- Parentheses are optional for function arguments, except for when

-- you use an explicit type annotation.

-- Lean can often infer the type of the function's arguments.

def sampleFunction1 x := x*x + 3

-- Apply the function, naming the function return result using 'def'.

-- The variable type is inferred from the function return type.

def result1 := sampleFunction1 4573

-- This line uses an interpolated string to print the result. Expressions inside

-- braces `{}` are converted into strings using the polymorphic method `toString`

#eval println! "The result of squaring the integer 4573 and adding 3 is {result1}"

-- When needed, annotate the type of a parameter name using '(argument : type)'.

def sampleFunction2 (x : Nat) := 2*x*x - x + 3

def result2 := sampleFunction2 (7 + 4)

#eval println! "The result of applying the 2nd sample function to (7 + 4) is {result2}"

-- Conditionals use if/then/else

def sampleFunction3 (x : Int) :=

if x > 100 then

2*x*x - x + 3

else

2*x*x + x - 37

#eval println! "The result of applying sampleFunction3 to 2 is {sampleFunction3 2}"

end BasicFunctions

-- Lean has first-class functions.

-- `twice` takes two arguments `f` and `a` where

-- `f` is a function from natural numbers to natural numbers, and

-- `a` is a natural number.

def twice (f : Nat → Nat) (a : Nat) :=

f (f a)

-- `fun` is used to declare anonymous functions

#eval twice (fun x => x + 2) 10

-- You can prove theorems about your functions.

-- The following theorem states that for any natural number `a`,

-- adding 2 twice produces a value equal to `a + 4`.

theorem twiceAdd2 (a : Nat) : twice (fun x => x + 2) a = a + 4 :=

-- The proof is by reflexivity. Lean "symbolically" reduces both sides of the equality

-- until they are identical.

rfl

-- `(· + 2)` is syntax sugar for `(fun x => x + 2)`. The parentheses + `·` notation

-- is useful for defining simple anonymous functions.

#eval twice (· + 2) 10

-- Enumerated types are a special case of inductive types in Lean,

-- which we will learn about later.

-- The following command creates a new type `Weekday`.

inductive Weekday where

| sunday : Weekday

| monday : Weekday

| tuesday : Weekday

| wednesday : Weekday

| thursday : Weekday

| friday : Weekday

| saturday : Weekday

-- `Weekday` has 7 constructors/elements.

-- The constructors live in the `Weekday` namespace.

-- Think of `sunday`, `monday`, …, `saturday` as being distinct elements of `Weekday`,

-- with no other distinguishing properties.

-- The command `#check` prints the type of a term in Lean.

#check Weekday.sunday

#check Weekday.monday

-- The `open` command opens a namespace, making all declarations in it accessible without

-- qualification.

open Weekday

#check sunday

#check tuesday

-- You can define functions by pattern matching.

-- The following function converts a `Weekday` into a natural number.

def natOfWeekday (d : Weekday) : Nat :=

match d with

| sunday => 1

| monday => 2

| tuesday => 3

| wednesday => 4

| thursday => 5

| friday => 6

| saturday => 7

#eval natOfWeekday tuesday

def isMonday : Weekday → Bool :=

-- `fun` + `match` is a common idiom.

-- The following expression is syntax sugar for

-- `fun d => match d with | monday => true | _ => false`.

fun

| monday => true

| _ => false

#eval isMonday monday

#eval isMonday sunday

-- Lean has support for type classes and polymorphic methods.

-- The `toString` method converts a value into a `String`.

#eval toString 10

#eval toString (10, 20)

-- The method `toString` converts values of any type that implements

-- the class `ToString`.

-- You can implement instances of `ToString` for your own types.

instance : ToString Weekday where

toString (d : Weekday) : String :=

match d with

| sunday => "Sunday"

| monday => "Monday"

| tuesday => "Tuesday"

| wednesday => "Wednesday"

| thursday => "Thursday"

| friday => "Friday"

| saturday => "Saturday"

#eval toString (sunday, 10)

def Weekday.next (d : Weekday) : Weekday :=

match d with

| sunday => monday

| monday => tuesday

| tuesday => wednesday

| wednesday => thursday

| thursday => friday

| friday => saturday

| saturday => sunday

#eval Weekday.next Weekday.wednesday

-- Since the `Weekday` namespace has already been opened, you can also write

#eval next wednesday

-- Matching on a parameter like in the previous definition

-- is so common that Lean provides syntax sugar for it. The following

-- function uses it.

def Weekday.previous : Weekday -> Weekday

| sunday => saturday

| monday => sunday

| tuesday => monday

| wednesday => tuesday

| thursday => wednesday

| friday => thursday

| saturday => friday

#eval next (previous wednesday)

-- We can prove that for any `Weekday` `d`, `next (previous d) = d`

theorem Weekday.nextOfPrevious (d : Weekday) : next (previous d) = d :=

match d with

| sunday => rfl

| monday => rfl

| tuesday => rfl

| wednesday => rfl

| thursday => rfl

| friday => rfl

| saturday => rfl

-- You can automate definitions such as `Weekday.nextOfPrevious`

-- using metaprogramming (or "tactics").

theorem Weekday.nextOfPrevious' (d : Weekday) : next (previous d) = d := by

cases d -- A proof by case distinction

all_goals rfl -- Each case is solved using `rfl`

Quickstart

These instructions will walk you through setting up Lean 4 together with VS Code as an editor for Lean 4. See Setup for supported platforms and other ways to set up Lean 4.

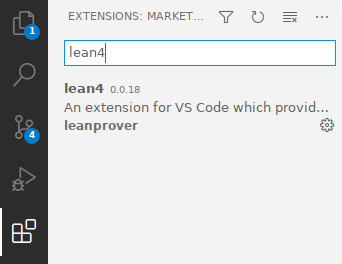

-

Install VS Code.

-

Launch VS Code and install the

lean4extension by clicking on the "Extensions" sidebar entry and searching for "lean4".

-

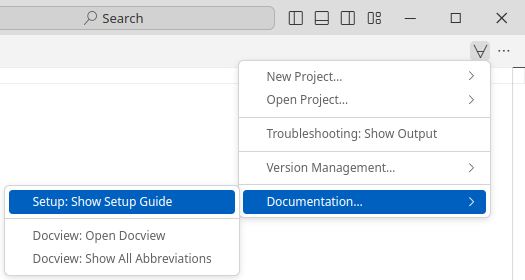

Open the Lean 4 setup guide by creating a new text file using "File > New Text File" (

Ctrl+N), clicking on the ∀-symbol in the top right and selecting "Documentation… > Setup: Show Setup Guide".

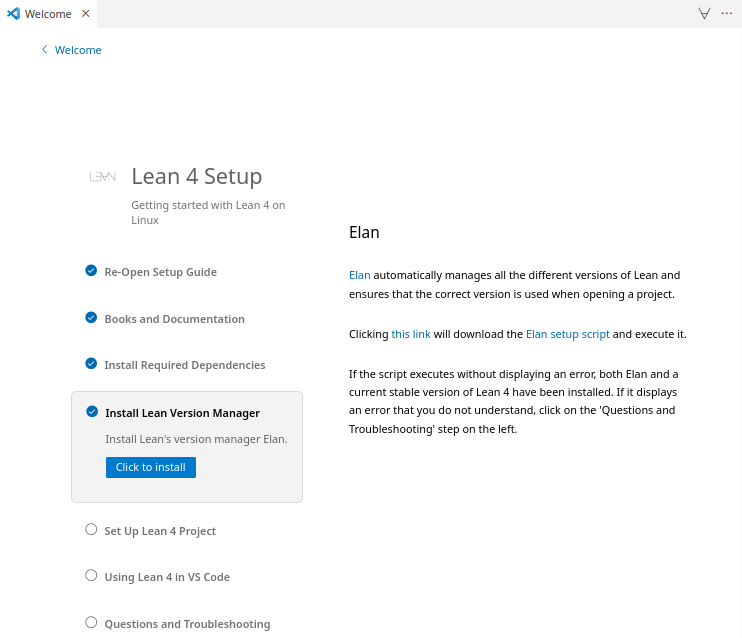

-

Follow the Lean 4 setup guide. It will walk you through learning resources for Lean 4, teach you how to set up Lean's dependencies on your platform, install Lean 4 for you at the click of a button and help you set up your first project.

Supported Platforms

Tier 1

Platforms built & tested by our CI, available as binary releases via elan (see below)

- x86-64 Linux with glibc 2.27+

- x86-64 macOS 10.15+

- aarch64 (Apple Silicon) macOS 10.15+

- x86-64 Windows 10+

Tier 2

Platforms cross-compiled but not tested by our CI, available as binary releases

Releases may be silently broken due to the lack of automated testing. Issue reports and fixes are welcome.

- aarch64 Linux with glibc 2.27+

- x86 (32-bit) Linux

- Emscripten Web Assembly

Setting Up Lean

See also the quickstart instructions for a standard setup with VS Code as the editor.

Release builds for all supported platforms are available at https://github.com/leanprover/lean4/releases.

Instead of downloading these and setting up the paths manually, however, it is recommended to use the Lean version manager elan instead:

$ elan self update # in case you haven't updated elan in a while

# download & activate latest Lean 4 stable release (https://github.com/leanprover/lean4/releases)

$ elan default leanprover/lean4:stable

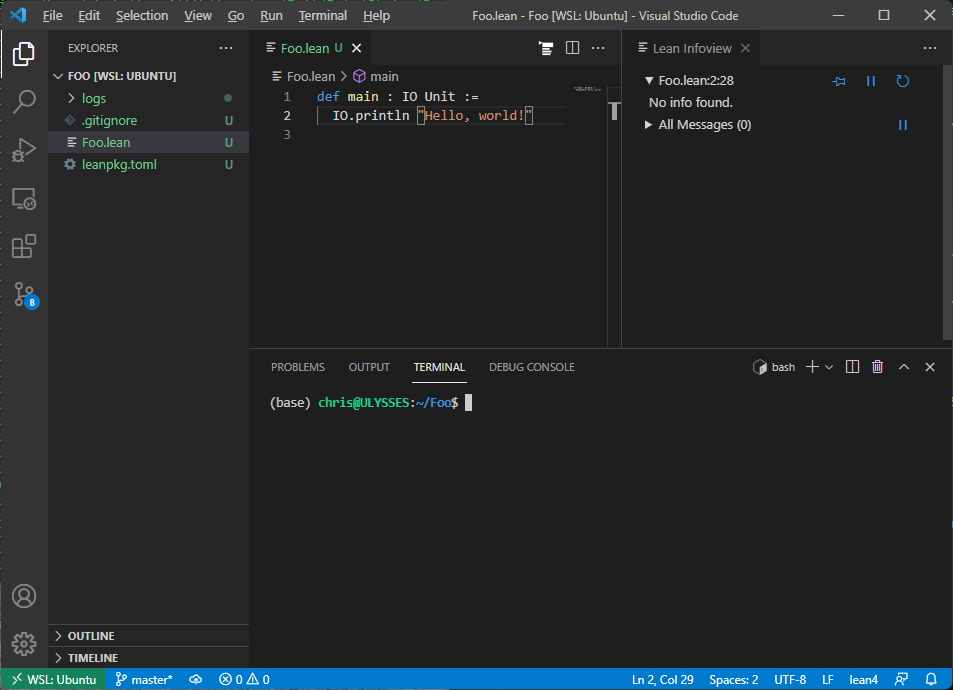

lake

Lean 4 comes with a package manager named lake.

Use lake init foo to initialize a Lean package foo in the current directory, and lake build to typecheck and build it as well as all its dependencies. Use lake help to learn about further commands.

The general directory structure of a package foo is

lakefile.lean # package configuration

lean-toolchain # specifies the lean version to use

Foo.lean # main file, import via `import Foo`

Foo/

A.lean # further files, import via e.g. `import Foo.A`

A/... # further nesting

.lake/ # `lake` build output directory

After running lake build you will see a binary named ./.lake/build/bin/foo and when you run it you should see the output:

Hello, world!

Editing

Lean implements the Language Server Protocol that can be used for interactive development in Emacs, VS Code, and possibly other editors.

Changes must be saved to be visible in other files, which must then be invalidated using an editor command (see links above).

Theorem Proving in Lean

We strongly encourage you to read the book Theorem Proving in Lean. Many Lean users consider it to be the Lean Bible.

Functional Programming in Lean

The goal of this book is to be an accessible introduction to using Lean 4 as a programming language. It should be useful both to people who want to use Lean as a general-purpose programming language and to mathematicians who want to develop larger-scale proof automation but do not have a background in functional programming. It does not assume any background with functional programming, though it's probably not a good first book on programming in general. New content will be added once per month until it's done.

Examples

- Palindromes

- Binary Search Trees

- A Certified Type Checker

- The Well-Typed Interpreter

- Dependent de Bruijn Indices

- Parametric Higher-Order Abstract Syntax

(this example is rendered by Alectryon in the CI)

/-!

# Palindromes

Palindromes are lists that read the same from left to right and from right to left.

For example, `[a, b, b, a]` and `[a, h, a]` are palindromes.

We use an inductive predicate to specify whether a list is a palindrome or not.

Recall that inductive predicates, or inductively defined propositions, are a convenient

way to specify functions of type `... → Prop`.

This example is a based on an example from the book "The Hitchhiker's Guide to Logical Verification".

-/

inductive Palindrome : List α → Prop where

| nil : Palindrome []

| single : (a : α) → Palindrome [a]

| sandwich : (a : α) → Palindrome as → Palindrome ([a] ++ as ++ [a])

/-!

The definition distinguishes three cases: (1) `[]` is a palindrome; (2) for any element

`a`, the singleton list `[a]` is a palindrome; (3) for any element `a` and any palindrome

`[b₁, . . ., bₙ]`, the list `[a, b₁, . . ., bₙ, a]` is a palindrome.

-/

/-!

We now prove that the reverse of a palindrome is a palindrome using induction on the inductive predicate `h : Palindrome as`.

-/

theorem palindrome_reverse (h : Palindrome as) : Palindrome as.reverse := by

induction h with

| nil => exact Palindrome.nil

| single a => exact Palindrome.single a

| sandwich a h ih => simp; exact Palindrome.sandwich _ ih

/-! If a list `as` is a palindrome, then the reverse of `as` is equal to itself. -/

theorem reverse_eq_of_palindrome (h : Palindrome as) : as.reverse = as := by

induction h with

| nil => rfl

| single a => rfl

| sandwich a h ih => simp [ih]

/-! Note that you can also easily prove `palindrome_reverse` using `reverse_eq_of_palindrome`. -/

example (h : Palindrome as) : Palindrome as.reverse := by

simp [reverse_eq_of_palindrome h, h]

/-!

Given a nonempty list, the function `List.last` returns its element.

Note that we use `(by simp)` to prove that `a₂ :: as ≠ []` in the recursive application.

-/

def List.last : (as : List α) → as ≠ [] → α

| [a], _ => a

| _::a₂:: as, _ => (a₂::as).last (by simp)

/-!

We use the function `List.last` to prove the following theorem that says that if a list `as` is not empty,

then removing the last element from `as` and appending it back is equal to `as`.

We use the attribute `@[simp]` to instruct the `simp` tactic to use this theorem as a simplification rule.

-/

@[simp] theorem List.dropLast_append_last (h : as ≠ []) : as.dropLast ++ [as.last h] = as := by

match as with

| [] => contradiction

| [a] => simp_all [last, dropLast]

| a₁ :: a₂ :: as =>

simp [last, dropLast]

exact dropLast_append_last (as := a₂ :: as) (by simp)

/-!

We now define the following auxiliary induction principle for lists using well-founded recursion on `as.length`.

We can read it as follows, to prove `motive as`, it suffices to show that: (1) `motive []`; (2) `motive [a]` for any `a`;

(3) if `motive as` holds, then `motive ([a] ++ as ++ [b])` also holds for any `a`, `b`, and `as`.

Note that the structure of this induction principle is very similar to the `Palindrome` inductive predicate.

-/

theorem List.palindrome_ind (motive : List α → Prop)

(h₁ : motive [])

(h₂ : (a : α) → motive [a])

(h₃ : (a b : α) → (as : List α) → motive as → motive ([a] ++ as ++ [b]))

(as : List α)

: motive as :=

match as with

| [] => h₁

| [a] => h₂ a

| a₁::a₂::as' =>

have ih := palindrome_ind motive h₁ h₂ h₃ (a₂::as').dropLast

have : [a₁] ++ (a₂::as').dropLast ++ [(a₂::as').last (by simp)] = a₁::a₂::as' := by simp

this ▸ h₃ _ _ _ ih

termination_by as.length

/-!

We use our new induction principle to prove that if `as.reverse = as`, then `Palindrome as` holds.

Note that we use the `using` modifier to instruct the `induction` tactic to use this induction principle

instead of the default one for lists.

-/

theorem List.palindrome_of_eq_reverse (h : as.reverse = as) : Palindrome as := by

induction as using palindrome_ind

next => exact Palindrome.nil

next a => exact Palindrome.single a

next a b as ih =>

have : a = b := by simp_all

subst this

have : as.reverse = as := by simp_all

exact Palindrome.sandwich a (ih this)

/-!

We now define a function that returns `true` iff `as` is a palindrome.

The function assumes that the type `α` has decidable equality. We need this assumption

because we need to compare the list elements.

-/

def List.isPalindrome [DecidableEq α] (as : List α) : Bool :=

as.reverse = as

/-!

It is straightforward to prove that `isPalindrome` is correct using the previously proved theorems.

-/

theorem List.isPalindrome_correct [DecidableEq α] (as : List α) : as.isPalindrome ↔ Palindrome as := by

simp [isPalindrome]

exact Iff.intro (fun h => palindrome_of_eq_reverse h) (fun h => reverse_eq_of_palindrome h)

#eval [1, 2, 1].isPalindrome

#eval [1, 2, 3, 1].isPalindrome

example : [1, 2, 1].isPalindrome := rfl

example : [1, 2, 2, 1].isPalindrome := rfl

example : ![1, 2, 3, 1].isPalindrome := rfl

(this example is rendered by Alectryon in the CI)

/-!

# Binary Search Trees

If the type of keys can be totally ordered -- that is, it supports a well-behaved `≤` comparison --

then maps can be implemented with binary search trees (BSTs). Insert and lookup operations on BSTs take time

proportional to the height of the tree. If the tree is balanced, the operations therefore take logarithmic time.

This example is based on a similar example found in the ["Software Foundations"](https://softwarefoundations.cis.upenn.edu/vfa-current/SearchTree.html)

book (volume 3).

-/

/-!

We use `Nat` as the key type in our implementation of BSTs,

since it has a convenient total order with lots of theorems and automation available.

We leave as an exercise to the reader the generalization to arbitrary types.

-/

inductive Tree (β : Type v) where

| leaf

| node (left : Tree β) (key : Nat) (value : β) (right : Tree β)

deriving Repr

/-!

The function `contains` returns `true` iff the given tree contains the key `k`.

-/

def Tree.contains (t : Tree β) (k : Nat) : Bool :=

match t with

| leaf => false

| node left key value right =>

if k < key then

left.contains k

else if key < k then

right.contains k

else

true

/-!

`t.find? k` returns `some v` if `v` is the value bound to key `k` in the tree `t`. It returns `none` otherwise.

-/

def Tree.find? (t : Tree β) (k : Nat) : Option β :=

match t with

| leaf => none

| node left key value right =>

if k < key then

left.find? k

else if key < k then

right.find? k

else

some value

/-!

`t.insert k v` is the map containing all the bindings of `t` along with a binding of `k` to `v`.

-/

def Tree.insert (t : Tree β) (k : Nat) (v : β) : Tree β :=

match t with

| leaf => node leaf k v leaf

| node left key value right =>

if k < key then

node (left.insert k v) key value right

else if key < k then

node left key value (right.insert k v)

else

node left k v right

/-!

Let's add a new operation to our tree: converting it to an association list that contains the key--value bindings from the tree stored as pairs.

If that list is sorted by the keys, then any two trees that represent the same map would be converted to the same list.

Here's a function that does so with an in-order traversal of the tree.

-/

def Tree.toList (t : Tree β) : List (Nat × β) :=

match t with

| leaf => []

| node l k v r => l.toList ++ [(k, v)] ++ r.toList

#eval Tree.leaf.insert 2 "two"

|>.insert 3 "three"

|>.insert 1 "one"

#eval Tree.leaf.insert 2 "two"

|>.insert 3 "three"

|>.insert 1 "one"

|>.toList

/-!

The implementation of `Tree.toList` is inefficient because of how it uses the `++` operator.

On a balanced tree its running time is linearithmic, because it does a linear number of

concatenations at each level of the tree. On an unbalanced tree it's quadratic time.

Here's a tail-recursive implementation than runs in linear time, regardless of whether the tree is balanced:

-/

def Tree.toListTR (t : Tree β) : List (Nat × β) :=

go t []

where

go (t : Tree β) (acc : List (Nat × β)) : List (Nat × β) :=

match t with

| leaf => acc

| node l k v r => go l ((k, v) :: go r acc)

/-!

We now prove that `t.toList` and `t.toListTR` return the same list.

The proof is on induction, and as we used the auxiliary function `go`

to define `Tree.toListTR`, we use the auxiliary theorem `go` to prove the theorem.

The proof of the auxiliary theorem is by induction on `t`.

The `generalizing acc` modifier instructs Lean to revert `acc`, apply the

induction theorem for `Tree`s, and then reintroduce `acc` in each case.

By using `generalizing`, we obtain the more general induction hypotheses

- `left_ih : ∀ acc, toListTR.go left acc = toList left ++ acc`

- `right_ih : ∀ acc, toListTR.go right acc = toList right ++ acc`

Recall that the combinator `tac <;> tac'` runs `tac` on the main goal and `tac'` on each produced goal,

concatenating all goals produced by `tac'`. In this theorem, we use it to apply

`simp` and close each subgoal produced by the `induction` tactic.

The `simp` parameters `toListTR.go` and `toList` instruct the simplifier to try to reduce

and/or apply auto generated equation theorems for these two functions.

The parameter `*` instructs the simplifier to use any equation in a goal as rewriting rules.

In this particular case, `simp` uses the induction hypotheses as rewriting rules.

Finally, the parameter `List.append_assoc` instructs the simplifier to use the

`List.append_assoc` theorem as a rewriting rule.

-/

theorem Tree.toList_eq_toListTR (t : Tree β)

: t.toList = t.toListTR := by

simp [toListTR, go t []]

where

go (t : Tree β) (acc : List (Nat × β))

: toListTR.go t acc = t.toList ++ acc := by

induction t generalizing acc <;>

simp [toListTR.go, toList, *, List.append_assoc]

/-!

The `[csimp]` annotation instructs the Lean code generator to replace

any `Tree.toList` with `Tree.toListTR` when generating code.

-/

@[csimp] theorem Tree.toList_eq_toListTR_csimp

: @Tree.toList = @Tree.toListTR := by

funext β t

apply toList_eq_toListTR

/-!

The implementations of `Tree.find?` and `Tree.insert` assume that values of type tree obey the BST invariant:

for any non-empty node with key `k`, all the values of the `left` subtree are less than `k` and all the values

of the right subtree are greater than `k`. But that invariant is not part of the definition of tree.

So, let's formalize the BST invariant. Here's one way to do so. First, we define a helper `ForallTree`

to express that idea that a predicate holds at every node of a tree:

-/

inductive ForallTree (p : Nat → β → Prop) : Tree β → Prop

| leaf : ForallTree p .leaf

| node :

ForallTree p left →

p key value →

ForallTree p right →

ForallTree p (.node left key value right)

/-!

Second, we define the BST invariant:

An empty tree is a BST.

A non-empty tree is a BST if all its left nodes have a lesser key, its right nodes have a greater key, and the left and right subtrees are themselves BSTs.

-/

inductive BST : Tree β → Prop

| leaf : BST .leaf

| node :

ForallTree (fun k v => k < key) left →

ForallTree (fun k v => key < k) right →

BST left → BST right →

BST (.node left key value right)

/-!

We can use the `macro` command to create helper tactics for organizing our proofs.

The macro `have_eq x y` tries to prove `x = y` using linear arithmetic, and then

immediately uses the new equality to substitute `x` with `y` everywhere in the goal.

The modifier `local` specifies the scope of the macro.

-/

/-- The `have_eq lhs rhs` tactic (tries to) prove that `lhs = rhs`,

and then replaces `lhs` with `rhs`. -/

local macro "have_eq " lhs:term:max rhs:term:max : tactic =>

`(tactic|

(have h : $lhs = $rhs :=

-- TODO: replace with linarith

by simp_arith at *; apply Nat.le_antisymm <;> assumption

try subst $lhs))

/-!

The `by_cases' e` is just the regular `by_cases` followed by `simp` using all

hypotheses in the current goal as rewriting rules.

Recall that the `by_cases` tactic creates two goals. One where we have `h : e` and

another one containing `h : ¬ e`. The simplifier uses the `h` to rewrite `e` to `True`

in the first subgoal, and `e` to `False` in the second. This is particularly

useful if `e` is the condition of an `if`-statement.

-/

/-- `by_cases' e` is a shorthand form `by_cases e <;> simp[*]` -/

local macro "by_cases' " e:term : tactic =>

`(tactic| by_cases $e <;> simp [*])

/-!

We can use the attribute `[simp]` to instruct the simplifier to reduce given definitions or

apply rewrite theorems. The `local` modifier limits the scope of this modification to this file.

-/

attribute [local simp] Tree.insert

/-!

We now prove that `Tree.insert` preserves the BST invariant using induction and case analysis.

Recall that the tactic `. tac` focuses on the main goal and tries to solve it using `tac`, or else fails.

It is used to structure proofs in Lean.

The notation `‹e›` is just syntax sugar for `(by assumption : e)`. That is, it tries to find a hypothesis `h : e`.

It is useful to access hypothesis that have auto generated names (aka "inaccessible") names.

-/

theorem Tree.forall_insert_of_forall

(h₁ : ForallTree p t) (h₂ : p key value)

: ForallTree p (t.insert key value) := by

induction h₁ with

| leaf => exact .node .leaf h₂ .leaf

| node hl hp hr ihl ihr =>

rename Nat => k

by_cases' key < k

. exact .node ihl hp hr

. by_cases' k < key

. exact .node hl hp ihr

. have_eq key k

exact .node hl h₂ hr

theorem Tree.bst_insert_of_bst

{t : Tree β} (h : BST t) (key : Nat) (value : β)

: BST (t.insert key value) := by

induction h with

| leaf => exact .node .leaf .leaf .leaf .leaf

| node h₁ h₂ b₁ b₂ ih₁ ih₂ =>

rename Nat => k

simp

by_cases' key < k

. exact .node (forall_insert_of_forall h₁ ‹key < k›) h₂ ih₁ b₂

. by_cases' k < key

. exact .node h₁ (forall_insert_of_forall h₂ ‹k < key›) b₁ ih₂

. have_eq key k

exact .node h₁ h₂ b₁ b₂

/-!

Now, we define the type `BinTree` using a `Subtype` that states that only trees satisfying the BST invariant are `BinTree`s.

-/

def BinTree (β : Type u) := { t : Tree β // BST t }

def BinTree.mk : BinTree β :=

⟨.leaf, .leaf⟩

def BinTree.contains (b : BinTree β) (k : Nat) : Bool :=

b.val.contains k

def BinTree.find? (b : BinTree β) (k : Nat) : Option β :=

b.val.find? k

def BinTree.insert (b : BinTree β) (k : Nat) (v : β) : BinTree β :=

⟨b.val.insert k v, b.val.bst_insert_of_bst b.property k v⟩

/-!

Finally, we prove that `BinTree.find?` and `BinTree.insert` satisfy the map properties.

-/

attribute [local simp]

BinTree.mk BinTree.contains BinTree.find?

BinTree.insert Tree.find? Tree.contains Tree.insert

theorem BinTree.find_mk (k : Nat)

: BinTree.mk.find? k = (none : Option β) := by

simp

theorem BinTree.find_insert (b : BinTree β) (k : Nat) (v : β)

: (b.insert k v).find? k = some v := by

let ⟨t, h⟩ := b; simp

induction t with simp

| node left key value right ihl ihr =>

by_cases' k < key

. cases h; apply ihl; assumption

. by_cases' key < k

cases h; apply ihr; assumption

theorem BinTree.find_insert_of_ne (b : BinTree β) (ne : k ≠ k') (v : β)

: (b.insert k v).find? k' = b.find? k' := by

let ⟨t, h⟩ := b; simp

induction t with simp

| leaf =>

intros le

exact Nat.lt_of_le_of_ne le ne

| node left key value right ihl ihr =>

let .node hl hr bl br := h

specialize ihl bl

specialize ihr br

by_cases' k < key; by_cases' key < k

have_eq key k

by_cases' k' < k; by_cases' k < k'

have_eq k k'

contradiction

(this example is rendered by Alectryon in the CI)

/-!

# A Certified Type Checker

In this example, we build a certified type checker for a simple expression

language.

Remark: this example is based on an example in the book [Certified Programming with Dependent Types](http://adam.chlipala.net/cpdt/) by Adam Chlipala.

-/

inductive Expr where

| nat : Nat → Expr

| plus : Expr → Expr → Expr

| bool : Bool → Expr

| and : Expr → Expr → Expr

/-!

We define a simple language of types using the inductive datatype `Ty`, and

its typing rules using the inductive predicate `HasType`.

-/

inductive Ty where

| nat

| bool

deriving DecidableEq

inductive HasType : Expr → Ty → Prop

| nat : HasType (.nat v) .nat

| plus : HasType a .nat → HasType b .nat → HasType (.plus a b) .nat

| bool : HasType (.bool v) .bool

| and : HasType a .bool → HasType b .bool → HasType (.and a b) .bool

/-!

We can easily show that if `e` has type `t₁` and type `t₂`, then `t₁` and `t₂` must be equal

by using the the `cases` tactic. This tactic creates a new subgoal for every constructor,

and automatically discharges unreachable cases. The tactic combinator `tac₁ <;> tac₂` applies

`tac₂` to each subgoal produced by `tac₁`. Then, the tactic `rfl` is used to close all produced

goals using reflexivity.

-/

theorem HasType.det (h₁ : HasType e t₁) (h₂ : HasType e t₂) : t₁ = t₂ := by

cases h₁ <;> cases h₂ <;> rfl

/-!

The inductive type `Maybe p` has two constructors: `found a h` and `unknown`.

The former contains an element `a : α` and a proof that `a` satisfies the predicate `p`.

The constructor `unknown` is used to encode "failure".

-/

inductive Maybe (p : α → Prop) where

| found : (a : α) → p a → Maybe p

| unknown

/-!

We define a notation for `Maybe` that is similar to the builtin notation for the Lean builtin type `Subtype`.

-/

notation "{{ " x " | " p " }}" => Maybe (fun x => p)

/-!

The function `Expr.typeCheck e` returns a type `ty` and a proof that `e` has type `ty`,

or `unknown`.

Recall that, `def Expr.typeCheck ...` in Lean is notation for `namespace Expr def typeCheck ... end Expr`.

The term `.found .nat .nat` is sugar for `Maybe.found Ty.nat HasType.nat`. Lean can infer the namespaces using

the expected types.

-/

def Expr.typeCheck (e : Expr) : {{ ty | HasType e ty }} :=

match e with

| nat .. => .found .nat .nat

| bool .. => .found .bool .bool

| plus a b =>

match a.typeCheck, b.typeCheck with

| .found .nat h₁, .found .nat h₂ => .found .nat (.plus h₁ h₂)

| _, _ => .unknown

| and a b =>

match a.typeCheck, b.typeCheck with

| .found .bool h₁, .found .bool h₂ => .found .bool (.and h₁ h₂)

| _, _ => .unknown

theorem Expr.typeCheck_correct (h₁ : HasType e ty) (h₂ : e.typeCheck ≠ .unknown)

: e.typeCheck = .found ty h := by

revert h₂

cases typeCheck e with

| found ty' h' => intro; have := HasType.det h₁ h'; subst this; rfl

| unknown => intros; contradiction

/-!

Now, we prove that if `Expr.typeCheck e` returns `Maybe.unknown`, then forall `ty`, `HasType e ty` does not hold.

The notation `e.typeCheck` is sugar for `Expr.typeCheck e`. Lean can infer this because we explicitly said that `e` has type `Expr`.

The proof is by induction on `e` and case analysis. The tactic `rename_i` is used to to rename "inaccessible" variables.

We say a variable is inaccessible if it is introduced by a tactic (e.g., `cases`) or has been shadowed by another variable introduced

by the user. Note that the tactic `simp [typeCheck]` is applied to all goal generated by the `induction` tactic, and closes

the cases corresponding to the constructors `Expr.nat` and `Expr.bool`.

-/

theorem Expr.typeCheck_complete {e : Expr} : e.typeCheck = .unknown → ¬ HasType e ty := by

induction e with simp [typeCheck]

| plus a b iha ihb =>

split

next => intros; contradiction

next ra rb hnp =>

-- Recall that `hnp` is a hypothesis generated by the `split` tactic

-- that asserts the previous case was not taken

intro h ht

cases ht with

| plus h₁ h₂ => exact hnp h₁ h₂ (typeCheck_correct h₁ (iha · h₁)) (typeCheck_correct h₂ (ihb · h₂))

| and a b iha ihb =>

split

next => intros; contradiction

next ra rb hnp =>

intro h ht

cases ht with

| and h₁ h₂ => exact hnp h₁ h₂ (typeCheck_correct h₁ (iha · h₁)) (typeCheck_correct h₂ (ihb · h₂))

/-!

Finally, we show that type checking for `e` can be decided using `Expr.typeCheck`.

-/

instance (e : Expr) (t : Ty) : Decidable (HasType e t) :=

match h' : e.typeCheck with

| .found t' ht' =>

if heq : t = t' then

isTrue (heq ▸ ht')

else

isFalse fun ht => heq (HasType.det ht ht')

| .unknown => isFalse (Expr.typeCheck_complete h')

(this example is rendered by Alectryon in the CI)

/-!

# The Well-Typed Interpreter

In this example, we build an interpreter for a simple functional programming language,

with variables, function application, binary operators and an `if...then...else` construct.

We will use the dependent type system to ensure that any programs which can be represented are well-typed.

Remark: this example is based on an example found in the Idris manual.

-/

/-!

Vectors

--------

A `Vector` is a list of size `n` whose elements belong to a type `α`.

-/

inductive Vector (α : Type u) : Nat → Type u

| nil : Vector α 0

| cons : α → Vector α n → Vector α (n+1)

/-!

We can overload the `List.cons` notation `::` and use it to create `Vector`s.

-/

infix:67 " :: " => Vector.cons

/-!

Now, we define the types of our simple functional language.

We have integers, booleans, and functions, represented by `Ty`.

-/

inductive Ty where

| int

| bool

| fn (a r : Ty)

/-!

We can write a function to translate `Ty` values to a Lean type

— remember that types are first class, so can be calculated just like any other value.

We mark `Ty.interp` as `[reducible]` to make sure the typeclass resolution procedure can

unfold/reduce it. For example, suppose Lean is trying to synthesize a value for the instance

`Add (Ty.interp Ty.int)`. Since `Ty.interp` is marked as `[reducible]`,

the typeclass resolution procedure can reduce `Ty.interp Ty.int` to `Int`, and use

the builtin instance for `Add Int` as the solution.

-/

@[reducible] def Ty.interp : Ty → Type

| int => Int

| bool => Bool

| fn a r => a.interp → r.interp

/-!

Expressions are indexed by the types of the local variables, and the type of the expression itself.

-/

inductive HasType : Fin n → Vector Ty n → Ty → Type where

| stop : HasType 0 (ty :: ctx) ty

| pop : HasType k ctx ty → HasType k.succ (u :: ctx) ty

inductive Expr : Vector Ty n → Ty → Type where

| var : HasType i ctx ty → Expr ctx ty

| val : Int → Expr ctx Ty.int

| lam : Expr (a :: ctx) ty → Expr ctx (Ty.fn a ty)

| app : Expr ctx (Ty.fn a ty) → Expr ctx a → Expr ctx ty

| op : (a.interp → b.interp → c.interp) → Expr ctx a → Expr ctx b → Expr ctx c

| ife : Expr ctx Ty.bool → Expr ctx a → Expr ctx a → Expr ctx a

| delay : (Unit → Expr ctx a) → Expr ctx a

/-!

We use the command `open` to create the aliases `stop` and `pop` for `HasType.stop` and `HasType.pop` respectively.

-/

open HasType (stop pop)

/-!

Since expressions are indexed by their type, we can read the typing rules of the language from the definitions of the constructors.

Let us look at each constructor in turn.

We use a nameless representation for variables — they are de Bruijn indexed.

Variables are represented by a proof of their membership in the context, `HasType i ctx ty`,

which is a proof that variable `i` in context `ctx` has type `ty`.

We can treat `stop` as a proof that the most recently defined variable is well-typed,

and `pop n` as a proof that, if the `n`th most recently defined variable is well-typed, so is the `n+1`th.

In practice, this means we use `stop` to refer to the most recently defined variable,

`pop stop` to refer to the next, and so on, via the `Expr.var` constructor.

A value `Expr.val` carries a concrete representation of an integer.

A lambda `Expr.lam` creates a function. In the scope of a function of type `Ty.fn a ty`, there is a

new local variable of type `a`.

A function application `Expr.app` produces a value of type `ty` given a function from `a` to `ty` and a value of type `a`.

The constructor `Expr.op` allows us to use arbitrary binary operators, where the type of the operator informs what the types of the arguments must be.

Finally, the constructor `Exp.ife` represents a `if-then-else` expression. The condition is a Boolean, and each branch must have the same type.

The auxiliary constructor `Expr.delay` is used to delay evaluation.

-/

/-!

When we evaluate an `Expr`, we’ll need to know the values in scope, as well as their types. `Env` is an environment,

indexed over the types in scope. Since an environment is just another form of list, albeit with a strongly specified connection

to the vector of local variable types, we overload again the notation `::` so that we can use the usual list syntax.

Given a proof that a variable is defined in the context, we can then produce a value from the environment.

-/

inductive Env : Vector Ty n → Type where

| nil : Env Vector.nil

| cons : Ty.interp a → Env ctx → Env (a :: ctx)

infix:67 " :: " => Env.cons

def Env.lookup : HasType i ctx ty → Env ctx → ty.interp

| stop, x :: xs => x

| pop k, x :: xs => lookup k xs

/-!

Given this, an interpreter is a function which translates an `Expr` into a Lean value with respect to a specific environment.

-/

def Expr.interp (env : Env ctx) : Expr ctx ty → ty.interp

| var i => env.lookup i

| val x => x

| lam b => fun x => b.interp (Env.cons x env)

| app f a => f.interp env (a.interp env)

| op o x y => o (x.interp env) (y.interp env)

| ife c t e => if c.interp env then t.interp env else e.interp env

| delay a => (a ()).interp env

open Expr

/-!

We can make some simple test functions. Firstly, adding two inputs `fun x y => y + x` is written as follows.

-/

def add : Expr ctx (Ty.fn Ty.int (Ty.fn Ty.int Ty.int)) :=

lam (lam (op (·+·) (var stop) (var (pop stop))))

#eval add.interp Env.nil 10 20

/-!

More interestingly, a factorial function fact (e.g. `fun x => if (x == 0) then 1 else (fact (x-1) * x)`), can be written as.

Note that this is a recursive (non-terminating) definition. For every input value, the interpreter terminates, but the

definition itself is non-terminating. We use two tricks to make sure Lean accepts it. First, we use the auxiliary constructor

`Expr.delay` to delay its unfolding. Second, we add the annotation `decreasing_by sorry` which can be viewed as

"trust me, this recursive definition makes sense". Recall that `sorry` is an unsound axiom in Lean.

-/

def fact : Expr ctx (Ty.fn Ty.int Ty.int) :=

lam (ife (op (·==·) (var stop) (val 0))

(val 1)

(op (·*·) (delay fun _ => app fact (op (·-·) (var stop) (val 1))) (var stop)))

decreasing_by sorry

#eval fact.interp Env.nil 10

(this example is rendered by Alectryon in the CI)

/-!

# Dependent de Bruijn Indices

In this example, we represent program syntax terms in a type family parameterized by a list of types,

representing the typing context, or information on which free variables are in scope and what

their types are.

Remark: this example is based on an example in the book [Certified Programming with Dependent Types](http://adam.chlipala.net/cpdt/) by Adam Chlipala.

-/

/-!

Programmers who move to statically typed functional languages from scripting languages

often complain about the requirement that every element of a list have the same type. With

fancy type systems, we can partially lift this requirement. We can index a list type with a

“type-level” list that explains what type each element of the list should have. This has been

done in a variety of ways in Haskell using type classes, and we can do it much more cleanly

and directly in Lean.

We parameterize our heterogeneous lists by at type `α` and an `α`-indexed type `β`.

-/

inductive HList {α : Type v} (β : α → Type u) : List α → Type (max u v)

| nil : HList β []

| cons : β i → HList β is → HList β (i::is)

/-!

We overload the `List.cons` notation `::` so we can also use it to create

heterogeneous lists.

-/

infix:67 " :: " => HList.cons

/-! We similarly overload the `List` notation `[]` for the empty heterogeneous list. -/

notation "[" "]" => HList.nil

/-!

Variables are represented in a way isomorphic to the natural numbers, where

number 0 represents the first element in the context, number 1 the second element, and so

on. Actually, instead of numbers, we use the `Member` inductive family.

The value of type `Member a as` can be viewed as a certificate that `a` is

an element of the list `as`. The constructor `Member.head` says that `a`

is in the list if the list begins with it. The constructor `Member.tail`

says that if `a` is in the list `bs`, it is also in the list `b::bs`.

-/

inductive Member : α → List α → Type

| head : Member a (a::as)

| tail : Member a bs → Member a (b::bs)

/-!

Given a heterogeneous list `HList β is` and value of type `Member i is`, `HList.get`

retrieves an element of type `β i` from the list.

The pattern `.head` and `.tail h` are sugar for `Member.head` and `Member.tail h` respectively.

Lean can infer the namespace using the expected type.

-/

def HList.get : HList β is → Member i is → β i

| a::as, .head => a

| a::as, .tail h => as.get h

/-!

Here is the definition of the simple type system for our programming language, a simply typed

lambda calculus with natural numbers as the base type.

-/

inductive Ty where

| nat

| fn : Ty → Ty → Ty

/-!

We can write a function to translate `Ty` values to a Lean type

— remember that types are first class, so can be calculated just like any other value.

We mark `Ty.denote` as `[reducible]` to make sure the typeclass resolution procedure can

unfold/reduce it. For example, suppose Lean is trying to synthesize a value for the instance

`Add (Ty.denote Ty.nat)`. Since `Ty.denote` is marked as `[reducible]`,

the typeclass resolution procedure can reduce `Ty.denote Ty.nat` to `Nat`, and use

the builtin instance for `Add Nat` as the solution.

Recall that the term `a.denote` is sugar for `denote a` where `denote` is the function being defined.

We call it the "dot notation".

-/

@[reducible] def Ty.denote : Ty → Type

| nat => Nat

| fn a b => a.denote → b.denote

/-!

Here is the definition of the `Term` type, including variables, constants, addition,

function application and abstraction, and let binding of local variables.

Since `let` is a keyword in Lean, we use the "escaped identifier" `«let»`.

You can input the unicode (French double quotes) using `\f<<` (for `«`) and `\f>>` (for `»`).

The term `Term ctx .nat` is sugar for `Term ctx Ty.nat`, Lean infers the namespace using the expected type.

-/

inductive Term : List Ty → Ty → Type

| var : Member ty ctx → Term ctx ty

| const : Nat → Term ctx .nat

| plus : Term ctx .nat → Term ctx .nat → Term ctx .nat

| app : Term ctx (.fn dom ran) → Term ctx dom → Term ctx ran

| lam : Term (dom :: ctx) ran → Term ctx (.fn dom ran)

| «let» : Term ctx ty₁ → Term (ty₁ :: ctx) ty₂ → Term ctx ty₂

/-!

Here are two example terms encoding, the first addition packaged as a two-argument

curried function, and the second of a sample application of addition to constants.

The command `open Ty Term Member` opens the namespaces `Ty`, `Term`, and `Member`. Thus,

you can write `lam` instead of `Term.lam`.

-/

open Ty Term Member

def add : Term [] (fn nat (fn nat nat)) :=

lam (lam (plus (var (tail head)) (var head)))

def three_the_hard_way : Term [] nat :=

app (app add (const 1)) (const 2)

/-!

Since dependent typing ensures that any term is well-formed in its context and has a particular type,

it is easy to translate syntactic terms into Lean values.

The attribute `[simp]` instructs Lean to always try to unfold `Term.denote` applications when one applies

the `simp` tactic. We also say this is a hint for the Lean term simplifier.

-/

@[simp] def Term.denote : Term ctx ty → HList Ty.denote ctx → ty.denote

| var h, env => env.get h

| const n, _ => n

| plus a b, env => a.denote env + b.denote env

| app f a, env => f.denote env (a.denote env)

| lam b, env => fun x => b.denote (x :: env)

| «let» a b, env => b.denote (a.denote env :: env)

/-!

You can show that the denotation of `three_the_hard_way` is indeed `3` using reflexivity.

-/

example : three_the_hard_way.denote [] = 3 :=

rfl

/-!

We now define the constant folding optimization that traverses a term if replaces subterms such as

`plus (const m) (const n)` with `const (n+m)`.

-/

@[simp] def Term.constFold : Term ctx ty → Term ctx ty

| const n => const n

| var h => var h

| app f a => app f.constFold a.constFold

| lam b => lam b.constFold

| «let» a b => «let» a.constFold b.constFold

| plus a b =>

match a.constFold, b.constFold with

| const n, const m => const (n+m)

| a', b' => plus a' b'

/-!

The correctness of the `Term.constFold` is proved using induction, case-analysis, and the term simplifier.

We prove all cases but the one for `plus` using `simp [*]`. This tactic instructs the term simplifier to

use hypotheses such as `a = b` as rewriting/simplications rules.

We use the `split` to break the nested `match` expression in the `plus` case into two cases.

The local variables `iha` and `ihb` are the induction hypotheses for `a` and `b`.

The modifier `←` in a term simplifier argument instructs the term simplifier to use the equation as a rewriting rule in

the "reverse direction". That is, given `h : a = b`, `← h` instructs the term simplifier to rewrite `b` subterms to `a`.

-/

theorem Term.constFold_sound (e : Term ctx ty) : e.constFold.denote env = e.denote env := by

induction e with simp [*]

| plus a b iha ihb =>

split

next he₁ he₂ => simp [← iha, ← ihb, he₁, he₂]

next => simp [iha, ihb]

(this example is rendered by Alectryon in the CI)

/-!

# Parametric Higher-Order Abstract Syntax

In contrast to first-order encodings, higher-order encodings avoid explicit modeling of variable identity.

Instead, the binding constructs of an object language (the language being

formalized) can be represented using the binding constructs of the meta language (the language in which the formalization is done).

The best known higher-order encoding is called higher-order abstract syntax (HOAS),

and we can start by attempting to apply it directly in Lean.

Remark: this example is based on an example in the book [Certified Programming with Dependent Types](http://adam.chlipala.net/cpdt/) by Adam Chlipala.

-/

/-!

Here is the definition of the simple type system for our programming language, a simply typed

lambda calculus with natural numbers as the base type.

-/

inductive Ty where

| nat

| fn : Ty → Ty → Ty

/-!

We can write a function to translate `Ty` values to a Lean type

— remember that types are first class, so can be calculated just like any other value.

We mark `Ty.denote` as `[reducible]` to make sure the typeclass resolution procedure can

unfold/reduce it. For example, suppose Lean is trying to synthesize a value for the instance

`Add (Ty.denote Ty.nat)`. Since `Ty.denote` is marked as `[reducible]`,

the typeclass resolution procedure can reduce `Ty.denote Ty.nat` to `Nat`, and use

the builtin instance for `Add Nat` as the solution.

Recall that the term `a.denote` is sugar for `denote a` where `denote` is the function being defined.

We call it the "dot notation".

-/

@[reducible] def Ty.denote : Ty → Type

| nat => Nat

| fn a b => a.denote → b.denote

/-!

With HOAS, each object language binding construct is represented with a function of

the meta language. Here is what we get if we apply that idea within an inductive definition

of term syntax. However a naive encondig in Lean fails to meet the strict positivity restrictions

imposed by the Lean kernel. An alternate higher-order encoding is parametric HOAS, as introduced by Washburn

and Weirich for Haskell and tweaked by Adam Chlipala for use in Coq. The key idea is to parameterize the

declaration by a type family `rep` standing for a "representation of variables."

-/

inductive Term' (rep : Ty → Type) : Ty → Type

| var : rep ty → Term' rep ty

| const : Nat → Term' rep .nat

| plus : Term' rep .nat → Term' rep .nat → Term' rep .nat

| lam : (rep dom → Term' rep ran) → Term' rep (.fn dom ran)

| app : Term' rep (.fn dom ran) → Term' rep dom → Term' rep ran

| let : Term' rep ty₁ → (rep ty₁ → Term' rep ty₂) → Term' rep ty₂

/-!

Lean accepts this definition because our embedded functions now merely take variables as

arguments, instead of arbitrary terms. One might wonder whether there is an easy loophole

to exploit here, instantiating the parameter `rep` as term itself. However, to do that, we

would need to choose a variable representation for this nested mention of term, and so on

through an infinite descent into term arguments.

We write the final type of a closed term using polymorphic quantification over all possible

choices of `rep` type family

-/

open Ty (nat fn)

namespace FirstTry

def Term (ty : Ty) := (rep : Ty → Type) → Term' rep ty

/-!

In the next two example, note how each is written as a function over a `rep` choice,

such that the specific choice has no impact on the structure of the term.

-/

def add : Term (fn nat (fn nat nat)) := fun _rep =>

.lam fun x => .lam fun y => .plus (.var x) (.var y)

def three_the_hard_way : Term nat := fun rep =>

.app (.app (add rep) (.const 1)) (.const 2)

end FirstTry

/-!

The argument `rep` does not even appear in the function body for `add`. How can that be?

By giving our terms expressive types, we allow Lean to infer many arguments for us. In fact,

we do not even need to name the `rep` argument! By using Lean implicit arguments and lambdas,

we can completely hide `rep` in these examples.

-/

def Term (ty : Ty) := {rep : Ty → Type} → Term' rep ty

def add : Term (fn nat (fn nat nat)) :=

.lam fun x => .lam fun y => .plus (.var x) (.var y)

def three_the_hard_way : Term nat :=

.app (.app add (.const 1)) (.const 2)

/-!

It may not be at all obvious that the PHOAS representation admits the crucial computable

operations. The key to effective deconstruction of PHOAS terms is one principle: treat

the `rep` parameter as an unconstrained choice of which data should be annotated on each

variable. We will begin with a simple example, that of counting how many variable nodes

appear in a PHOAS term. This operation requires no data annotated on variables, so we

simply annotate variables with `Unit` values. Note that, when we go under binders in the

cases for `lam` and `let`, we must provide the data value to annotate on the new variable we

pass beneath. For our current choice of `Unit` data, we always pass `()`.

-/

def countVars : Term' (fun _ => Unit) ty → Nat

| .var _ => 1

| .const _ => 0

| .plus a b => countVars a + countVars b

| .app f a => countVars f + countVars a

| .lam b => countVars (b ())

| .let a b => countVars a + countVars (b ())

/-! We can now easily prove that `add` has two variables by using reflexivity -/

example : countVars add = 2 :=

rfl

/-!

Here is another example, translating PHOAS terms into strings giving a first-order rendering.

To implement this translation, the key insight is to tag variables with strings, giving their names.

The function takes as an additional input `i` which is used to create variable names for binders.

We also use the string interpolation available in Lean. For example, `s!"x_{i}"` is expanded to

`"x_" ++ toString i`.

-/

def pretty (e : Term' (fun _ => String) ty) (i : Nat := 1) : String :=

match e with

| .var s => s

| .const n => toString n

| .app f a => s!"({pretty f i} {pretty a i})"

| .plus a b => s!"({pretty a i} + {pretty b i})"

| .lam f =>

let x := s!"x_{i}"

s!"(fun {x} => {pretty (f x) (i+1)})"

| .let a b =>

let x := s!"x_{i}"

s!"(let {x} := {pretty a i}; => {pretty (b x) (i+1)}"

#eval pretty three_the_hard_way

/-!

It is not necessary to convert to a different representation to support many common

operations on terms. For instance, we can implement substitution of terms for variables.

The key insight here is to tag variables with terms, so that, on encountering a variable, we

can simply replace it by the term in its tag. We will call this function initially on a term

with exactly one free variable, tagged with the appropriate substitute. During recursion,

new variables are added, but they are only tagged with their own term equivalents. Note

that this function squash is parameterized over a specific `rep` choice.

-/

def squash : Term' (Term' rep) ty → Term' rep ty

| .var e => e

| .const n => .const n

| .plus a b => .plus (squash a) (squash b)

| .lam f => .lam fun x => squash (f (.var x))

| .app f a => .app (squash f) (squash a)

| .let a b => .let (squash a) fun x => squash (b (.var x))

/-!

To define the final substitution function over terms with single free variables, we define

`Term1`, an analogue to Term that we defined before for closed terms.

-/

def Term1 (ty1 ty2 : Ty) := {rep : Ty → Type} → rep ty1 → Term' rep ty2

/-!

Substitution is defined by (1) instantiating a `Term1` to tag variables with terms and (2)

applying the result to a specific term to be substituted. Note how the parameter `rep` of

`squash` is instantiated: the body of `subst` is itself a polymorphic quantification over `rep`,

standing for a variable tag choice in the output term; and we use that input to compute a

tag choice for the input term.

-/

def subst (e : Term1 ty1 ty2) (e' : Term ty1) : Term ty2 :=

squash (e e')

/-!

We can view `Term1` as a term with hole. In the following example,

`(fun x => plus (var x) (const 5))` can be viewed as the term `plus _ (const 5)` where

the hole `_` is instantiated by `subst` with `three_the_hard_way`

-/

#eval pretty <| subst (fun x => .plus (.var x) (.const 5)) three_the_hard_way

/-!

One further development, which may seem surprising at first,

is that we can also implement a usual term denotation function,

when we tag variables with their denotations.

The attribute `[simp]` instructs Lean to always try to unfold `denote` applications when one applies

the `simp` tactic. We also say this is a hint for the Lean term simplifier.

-/

@[simp] def denote : Term' Ty.denote ty → ty.denote

| .var x => x

| .const n => n

| .plus a b => denote a + denote b

| .app f a => denote f (denote a)

| .lam f => fun x => denote (f x)

| .let a b => denote (b (denote a))

example : denote three_the_hard_way = 3 :=

rfl

/-!

To summarize, the PHOAS representation has all the expressive power of more

standard encodings (e.g., using de Bruijn indices), and a variety of translations are actually much more pleasant to

implement than usual, thanks to the novel ability to tag variables with data.

-/

/-!

We now define the constant folding optimization that traverses a term if replaces subterms such as

`plus (const m) (const n)` with `const (n+m)`.

-/

@[simp] def constFold : Term' rep ty → Term' rep ty

| .var x => .var x

| .const n => .const n

| .app f a => .app (constFold f) (constFold a)

| .lam f => .lam fun x => constFold (f x)

| .let a b => .let (constFold a) fun x => constFold (b x)

| .plus a b =>

match constFold a, constFold b with

| .const n, .const m => .const (n+m)

| a', b' => .plus a' b'

/-!

The correctness of the `constFold` is proved using induction, case-analysis, and the term simplifier.

We prove all cases but the one for `plus` using `simp [*]`. This tactic instructs the term simplifier to

use hypotheses such as `a = b` as rewriting/simplications rules.

We use the `split` to break the nested `match` expression in the `plus` case into two cases.

The local variables `iha` and `ihb` are the induction hypotheses for `a` and `b`.

The modifier `←` in a term simplifier argument instructs the term simplifier to use the equation as a rewriting rule in

the "reverse direction. That is, given `h : a = b`, `← h` instructs the term simplifier to rewrite `b` subterms to `a`.

-/

theorem constFold_sound (e : Term' Ty.denote ty) : denote (constFold e) = denote e := by

induction e with simp [*]

| plus a b iha ihb =>

split

next he₁ he₂ => simp [← iha, ← ihb, he₁, he₂]

next => simp [iha, ihb]

Organizational features

In this section we introduce some organizational features of Lean that are not a part of its kernel per se, but make it possible to work in the framework more efficiently.

Variables and Sections

Consider the following three function definitions:

def compose (α β γ : Type) (g : β → γ) (f : α → β) (x : α) : γ :=

g (f x)

def doTwice (α : Type) (h : α → α) (x : α) : α :=

h (h x)

def doThrice (α : Type) (h : α → α) (x : α) : α :=

h (h (h x))

Lean provides us with the variable command to make such declarations look more compact:

variable (α β γ : Type)

def compose (g : β → γ) (f : α → β) (x : α) : γ :=

g (f x)

def doTwice (h : α → α) (x : α) : α :=

h (h x)

def doThrice (h : α → α) (x : α) : α :=

h (h (h x))

We can declare variables of any type, not just Type itself:

variable (α β γ : Type)

variable (g : β → γ) (f : α → β) (h : α → α)

variable (x : α)

def compose := g (f x)

def doTwice := h (h x)

def doThrice := h (h (h x))

#print compose

#print doTwice

#print doThrice

Printing them out shows that all three groups of definitions have exactly the same effect.

The variable command instructs Lean to insert the declared variables as bound variables in definitions that refer to them.

Lean is smart enough to figure out which variables are used explicitly or implicitly in a definition. We can therefore proceed as

though α, β, γ, g, f, h, and x are fixed objects when we write our definitions, and let Lean abstract

the definitions for us automatically.

When declared in this way, a variable stays in scope until the end of the file we are working on.

Sometimes, however, it is useful to limit the scope of a variable. For that purpose, Lean provides the notion of a section:

section useful

variable (α β γ : Type)

variable (g : β → γ) (f : α → β) (h : α → α)

variable (x : α)

def compose := g (f x)

def doTwice := h (h x)

def doThrice := h (h (h x))

end useful

When the section is closed, the variables go out of scope, and become nothing more than a distant memory.

You do not have to indent the lines within a section. Nor do you have to name a section, which is to say,

you can use an anonymous section / end pair.

If you do name a section, however, you have to close it using the same name.

Sections can also be nested, which allows you to declare new variables incrementally.

Namespaces

Lean provides us with the ability to group definitions into nested, hierarchical namespaces:

namespace Foo

def a : Nat := 5

def f (x : Nat) : Nat := x + 7

def fa : Nat := f a

def ffa : Nat := f (f a)

#check a

#check f

#check fa

#check ffa

#check Foo.fa

end Foo

-- #check a -- error

-- #check f -- error

#check Foo.a

#check Foo.f

#check Foo.fa

#check Foo.ffa

open Foo

#check a

#check f

#check fa

#check Foo.fa

When we declare that we are working in the namespace Foo, every identifier we declare has

a full name with prefix "Foo." Within the namespace, we can refer to identifiers

by their shorter names, but once we end the namespace, we have to use the longer names.

The open command brings the shorter names into the current context. Often, when we import a

module, we will want to open one or more of the namespaces it contains, to have access to the short identifiers.

But sometimes we will want to leave this information hidden, for example, when they conflict with

identifiers in another namespace we want to use. Thus namespaces give us a way to manage our working environment.

For example, Lean groups definitions and theorems involving lists into a namespace List.

#check List.nil

#check List.cons

#check List.map

We will discuss their types, below. The command open List allows us to use the shorter names:

open List

#check nil

#check cons

#check map

Like sections, namespaces can be nested:

namespace Foo

def a : Nat := 5

def f (x : Nat) : Nat := x + 7

def fa : Nat := f a

namespace Bar

def ffa : Nat := f (f a)

#check fa

#check ffa

end Bar

#check fa

#check Bar.ffa

end Foo

#check Foo.fa

#check Foo.Bar.ffa

open Foo

#check fa

#check Bar.ffa

Namespaces that have been closed can later be reopened, even in another file:

namespace Foo

def a : Nat := 5

def f (x : Nat) : Nat := x + 7

def fa : Nat := f a

end Foo

#check Foo.a

#check Foo.f

namespace Foo

def ffa : Nat := f (f a)

end Foo

Like sections, nested namespaces have to be closed in the order they are opened.

Namespaces and sections serve different purposes: namespaces organize data and sections declare variables for insertion in definitions.

Sections are also useful for delimiting the scope of commands such as set_option and open.

In many respects, however, a namespace ... end block behaves the same as a section ... end block.

In particular, if you use the variable command within a namespace, its scope is limited to the namespace.

Similarly, if you use an open command within a namespace, its effects disappear when the namespace is closed.

Implicit Arguments

Suppose we define the compose function as.

def compose (α β γ : Type) (g : β → γ) (f : α → β) (x : α) : γ :=

g (f x)

The function compose takes three types, α, β, and γ, and two functions, g : β → γ and f : α → β, a value x : α, and

returns g (f x), the composition of g and f.

We say compose is polymorphic over types α, β, and γ. Now, let's use compose:

# def compose (α β γ : Type) (g : β → γ) (f : α → β) (x : α) : γ :=

# g (f x)

def double (x : Nat) := 2*x

def triple (x : Nat) := 3*x

#check compose Nat Nat Nat double triple 10 -- Nat

#eval compose Nat Nat Nat double triple 10 -- 60

def appendWorld (s : String) := s ++ "world"

#check String.length -- String → Nat

#check compose String String Nat String.length appendWorld "hello" -- Nat

#eval compose String String Nat String.length appendWorld "hello" -- 10

Because compose is polymorphic over types α, β, and γ, we have to provide them in the examples above.

But this information is redundant: one can infer the types from the arguments g and f.

This is a central feature of dependent type theory: terms carry a lot of information, and often some of that information can be inferred from the context.

In Lean, one uses an underscore, _, to specify that the system should fill in the information automatically.

# def compose (α β γ : Type) (g : β → γ) (f : α → β) (x : α) : γ :=

# g (f x)

# def double (x : Nat) := 2*x

# def triple (x : Nat) := 3*x

#check compose _ _ _ double triple 10 -- Nat

#eval compose Nat Nat Nat double triple 10 -- 60

# def appendWorld (s : String) := s ++ "world"

# #check String.length -- String → Nat

#check compose _ _ _ String.length appendWorld "hello" -- Nat

#eval compose _ _ _ String.length appendWorld "hello" -- 10

It is still tedious, however, to type all these underscores. When a function takes an argument that can generally be inferred from context, Lean allows us to specify that this argument should, by default, be left implicit. This is done by putting the arguments in curly braces, as follows:

def compose {α β γ : Type} (g : β → γ) (f : α → β) (x : α) : γ :=

g (f x)

# def double (x : Nat) := 2*x

# def triple (x : Nat) := 3*x

#check compose double triple 10 -- Nat

#eval compose double triple 10 -- 60

# def appendWorld (s : String) := s ++ "world"

# #check String.length -- String → Nat

#check compose String.length appendWorld "hello" -- Nat

#eval compose String.length appendWorld "hello" -- 10

All that has changed are the braces around α β γ: Type.

It makes these three arguments implicit. Notationally, this hides the specification of the type,

making it look as though compose simply takes 3 arguments.

Variables can also be specified as implicit when they are declared with

the variable command:

universe u

section

variable {α : Type u}

variable (x : α)

def ident := x

end

variable (α β : Type u)

variable (a : α) (b : β)

#check ident

#check ident a

#check ident b

This definition of ident here has the same effect as the one above.

Lean has very complex mechanisms for instantiating implicit arguments, and we will see that they can be used to infer function types, predicates, and even proofs.

The process of instantiating these "holes," or "placeholders," in a term is part of a bigger process called elaboration.

The presence of implicit arguments means that at times there may be insufficient information to fix the meaning of an expression precisely.

An expression like ident is said to be polymorphic, because it can take on different meanings in different contexts.

One can always specify the type T of an expression e by writing (e : T).

This instructs Lean's elaborator to use the value T as the type of e when trying to elaborate it.

In the following example, this mechanism is used to specify the desired types of the expressions ident.

def ident {α : Type u} (a : α) : α := a

#check (ident : Nat → Nat) -- Nat → Nat

Numerals are overloaded in Lean, but when the type of a numeral cannot be inferred, Lean assumes, by default, that it is a natural number.

So the expressions in the first two #check commands below are elaborated in the same way, whereas the third #check command interprets 2 as an integer.

#check 2 -- Nat

#check (2 : Nat) -- Nat

#check (2 : Int) -- Int

Sometimes, however, we may find ourselves in a situation where we have declared an argument to a function to be implicit,

but now want to provide the argument explicitly. If foo is such a function, the notation @foo denotes the same function with all

the arguments made explicit.

# def ident {α : Type u} (a : α) : α := a

variable (α β : Type)

#check @ident -- {α : Type u} → α → α

#check @ident α -- α → α

#check @ident β -- β → β

#check @ident Nat -- Nat → Nat

#check @ident Bool true -- Bool

Notice that now the first #check command gives the type of the identifier, ident, without inserting any placeholders.

Moreover, the output indicates that the first argument is implicit.

Named arguments enable you to specify an argument for a parameter by matching the argument with its name rather than with its position in the parameter list. You can use them to specify explicit and implicit arguments. If you don't remember the order of the parameters but know their names, you can send the arguments in any order. You may also provide the value for an implicit parameter when Lean failed to infer it. Named arguments also improve the readability of your code by identifying what each argument represents.

# def ident {α : Type u} (a : α) : α := a

#check ident (α := Nat) -- Nat → Nat

#check ident (α := Bool) -- Bool → Bool

Auto Bound Implicit Arguments

In the previous section, we have shown how implicit arguments make functions more convenient to use.

However, functions such as compose are still quite verbose to define. Note that the universe

polymorphic compose is even more verbose than the one previously defined.

universe u v w

def compose {α : Type u} {β : Type v} {γ : Type w}

(g : β → γ) (f : α → β) (x : α) : γ :=

g (f x)

You can avoid the universe command by providing the universe parameters when defining compose.

def compose.{u, v, w}

{α : Type u} {β : Type v} {γ : Type w}

(g : β → γ) (f : α → β) (x : α) : γ :=

g (f x)

Lean 4 supports a new feature called auto bound implicit arguments. It makes functions such as

compose much more convenient to write. When Lean processes the header of a declaration,

any unbound identifier is automatically added as an implicit argument if it is a single lower case or

greek letter. With this feature, we can write compose as

def compose (g : β → γ) (f : α → β) (x : α) : γ :=

g (f x)

#check @compose

-- {β : Sort u_1} → {γ : Sort u_2} → {α : Sort u_3} → (β → γ) → (α → β) → α → γ

Note that, Lean inferred a more general type using Sort instead of Type.

Although we love this feature and use it extensively when implementing Lean,

we realize some users may feel uncomfortable with it. Thus, you can disable it using

the command set_option autoImplicit false.

set_option autoImplicit false

/- The following definition produces `unknown identifier` errors -/

-- def compose (g : β → γ) (f : α → β) (x : α) : γ :=

-- g (f x)

The Lean language server provides semantic highlighting information to editors, and it provides visual feedback whether an identifier has been interpreted as an auto bound implicit argument.

Syntax Extensions

Lean's syntax can be extended and customized

by users at every level, ranging from basic "mixfix" notations

over macro transformers to

type-aware elaborators. In fact, all builtin syntax is parsed and

processed using the same mechanisms and APIs open to users. In this

section, we will describe and explain the various extension points.

Significant syntax extensions already builtin into Lean such as the

do notation are described in subsections.

While introducing new syntax is a relatively rare feature in programming languages and sometimes even frowned upon because of its potential to obscure code, it is an invaluable tool in formalization for expressing established conventions and notations of the respective field succinctly in code. Going beyond basic notations, Lean's ability to factor out common boilerplate code into (well-behaved) macros and to embed entire custom domain specific languages (DSLs) to textually encode subproblems efficiently and readably can be of great benefit to both programmers and proof engineers alike.

The do notation

Lean is a pure functional programming language, but you can write effectful code using the do embedded domain specific language (DSL). The following simple program prints two strings "hello" and "world" in the standard output and terminates with exit code 0. Note that the type of the program is IO UInt32. You can read this type as the type of values that perform input-output effects and produce a value of type UInt32.

def main : IO UInt32 := do

IO.println "hello"

IO.println "world"

return 0

The type of IO.println is String → IO Unit. That is, it is a function from String to IO Unit which indicates it may perform input-output effects and produce a value of type Unit. We often say that functions that may perform effects are methods.

We also say a method application, such as IO.println "hello" is an action.

Note that the examples above also demonstrates that braceless do blocks are whitespace sensitive.

If you like ;s and curly braces, you can write the example above as

def main : IO UInt32 := do {

IO.println "hello";

IO.println "world";

return 0;

}

Semicolons can be used even when curly braces are not used. They are particularly useful when you want to "pack" more than one action in a single line.

def main : IO UInt32 := do

IO.println "hello"; IO.println "world"

return 0

Whitespace sensitivity in programming languages is a controversial topic among programmers. You should use your own style. We, the Lean developers, love the braceless and semicolon-free style. We believe it is clean and beautiful.

The do DSL expands into the core Lean language. Let's inspect the different components using the commands #print and #check.

# def main : IO UInt32 := do

# IO.println "hello"

# IO.println "world"

# return 0

#check IO.println "hello"

-- IO Unit

#print main

-- Output contains the infix operator `>>=` and `pure`

-- The following `set_option` disables notation such as `>>=` in the output

set_option pp.notation false in

#print main

-- Output contains `bind` and `pure`

#print bind

-- bind : {m : Type u → Type v} → [self : Bind m] → {α β : Type u} →

-- m α → (α → m β) → m β

#print pure

-- pure : {m : Type u → Type v} → [self : Pure m] → {α : Type u} →

-- α → m α

-- IO implements the type classes `Bind` and `Pure`.

#check (inferInstance : Bind IO)

#check (inferInstance : Pure IO)

The types of bind and pure may look daunting at first sight.

They both have many implicit arguments. Let's focus first on the explicit arguments.

bind has two explicit arguments m α and α → m β. The first one should

be viewed as an action with effects m and producing a value of type α.

The second is a function that takes a value of type α and produces an action

with effects m and a value of type β. The result is m β. The method bind is composing

these two actions. We often say bind is an abstract semicolon. The method pure converts

a value α into an action that produces an action m α.

Here is the same function being defined using bind and pure without the do DSL.

def main : IO UInt32 :=

bind (IO.println "hello") fun _ =>

bind (IO.println "world") fun _ =>

pure 0

The notations let x <- action1; action2 and let x ← action1; action2 are just syntax sugar for bind action1 fun x => action2.

Here is a small example using it.

def isGreaterThan0 (x : Nat) : IO Bool := do

IO.println s!"value: {x}"

return x > 0

def f (x : Nat) : IO Unit := do

let c <- isGreaterThan0 x

if c then

IO.println s!"{x} is greater than 0"

else

pure ()

#eval f 10

-- value: 10

-- 10 is greater than 0

Nested actions